Imagine waking up to the smell of smoke, heart racing as you ensure your family’s safety. Early detection is crucial, and “Flame Guardian,” a deep learning-powered fire detection system, aims to make a life-saving difference. This article guides you through creating this technology using CNNs and TensorFlow, from data gathering and augmentation to model construction and fine-tuning. Whether you’re a tech enthusiast or a professional, discover how to leverage cutting-edge technology to protect lives and property.

This article was published as a part of the Data Science Blogathon.

In recent times, the Deep Learning has revolutionized colorful fields, from healthcare to finance, and now, it’s making strides in safety and disaster operations. One particularly instigative operation of Deep Learning is in the realm of fire discovery. With the adding frequency and inflexibility of backfires worldwide, developing an effective and dependable fire discovery system is more pivotal than ever. In this comprehensive companion, we will walk you through the process of creating an important fire discovery system using convolutional neural networks( CNNs) and TensorFlow. This system, aptly named” Flame Guardian,” aims to identify fire from images with high delicacy, potentially abetting in early discovery and forestallment of wide fire damage.

Fires, whether wildfires or structural fires pose a significant threat to life, property, and the environment. Early detection is critical in mitigating the devastating effects of fires. Deep-Learning based fire detection systems, can analyze vast amounts of data quickly and accurately, identifying fire incidents before they escalate.

Detecting fire using Deep Learning presents several challenges:

The dataset used for the Flame Guardian fire detection system comprises images categorized into two classes: “fire” and “non-fire.” The primary purpose of this dataset is to train a convolutional neural network (CNN) model to accurately distinguish between images that contain fire and those that do not.

You can download the dataset from here.

First, we need to set up our terrain with the necessary libraries and tools. We will be using Google Collab for this design, as it provides a accessible platform with GPU support. We’ve formerly downloaded the dataset and uploaded it on drive.

#Mount drive

from google.colab import drive

drive.mount('/content/drive')

#Importing necessary Libraries

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

import plotly.express as px

import plotly.graph_objects as go

from plotly.subplots import make_subplots

import os

import tensorflow as tf

from tensorflow.keras.preprocessing import image

from tensorflow.keras.preprocessing.image import ImageDataGenerator

#setting style grid

sns.set_style('darkgrid')We require a dataset with pictures of fire and non-fire scripts in order to train our algorithm. A blank DataFrame and a function to add images from our Google Drive to it will be created.

# Create an empty DataFrame

df = pd.DataFrame(columns=['path', 'label'])

# Function to add images to the DataFrame

def add_images_to_df(directory, label):

for dirname, _, filenames in os.walk(directory):

for filename in filenames:

df.loc[len(df)] = [os.path.join(dirname, filename), label]

# Add fire images

add_images_to_df('/content/drive/MyDrive/Fire/fire_dataset/fire_images', 'fire')

# Add non-fire images

add_images_to_df('/content/drive/MyDrive/Fire/fire_dataset/non_fire_images', 'non_fire')

# Shuffle the dataset

df = df.sample(frac=1).reset_index(drop=True)Visualizing the distribution of fire and non-fire images helps us understand our dataset better. We’ll use Plotly for interactive plots.

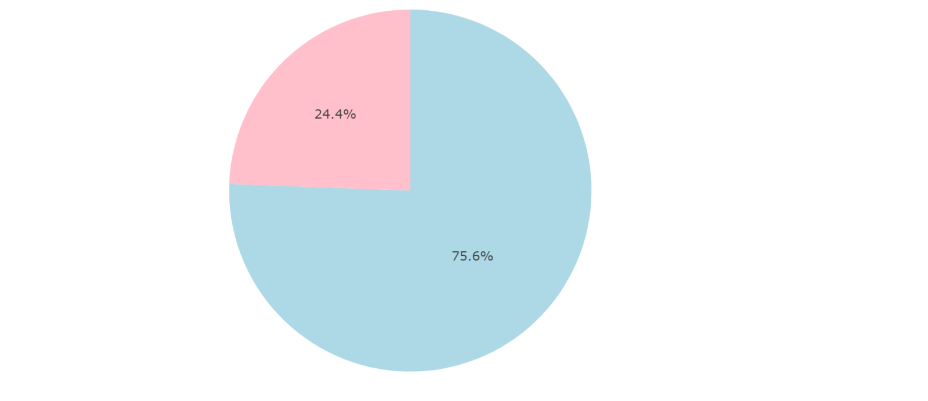

Let us now create a pie chart for image distribution.

# Create the scatter plot

fig = px.scatter(

data_frame=df,

x=df.index,

y='label',

color='label',

title='Distribution of Fire and Non-Fire Images'

)

# Update marker size

fig.update_traces(marker_size=2)

fig.add_trace(go.Pie(values=df['label'].value_counts().to_numpy(), labels=df['label'].value_counts().index, marker=dict(colors=['lightblue','pink'])), row=1, col=2)

Let us now write the code for displaying fire and non-fire images.

def visualize_images(label, title):

data = df[df['label'] == label]

pics = 6 # Set the number of pics

fig, ax = plt.subplots(int(pics // 2), 2, figsize=(15, 15))

plt.suptitle(title)

ax = ax.ravel()

for i in range((pics // 2) * 2):

path = data.sample(1).loc[:, 'path'].to_numpy()[0]

img = image.load_img(path)

img = image.img_to_array(img) / 255

ax[i].imshow(img)

ax[i].axes.xaxis.set_visible(False)

ax[i].axes.yaxis.set_visible(False)

visualize_images('fire', 'Images with Fire')

visualize_images('non_fire', 'Images without Fire')

By displaying some sample images from both fire and non-fire categories we would get a sense of what our model will be working with.

We’re going to apply image addition ways to ameliorate our training data. Applying arbitrary image adaptations, similar as gyration, drone, and shear, is known as addition. By generating a more robust and different dataset, this procedure enhances the model’s capacity to generalize to new images.

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Conv2D, MaxPool2D, Flatten, Dense

generator = ImageDataGenerator(

rotation_range= 20,

width_shift_range=0.1,

height_shift_range=0.1,

shear_range = 2,

zoom_range=0.2,

rescale = 1/255,

validation_split=0.2,

)

train_gen = generator.flow_from_dataframe(df,x_col='path',y_col='label',images_size=(256,256),class_mode='binary',subset='training')

val_gen = generator.flow_from_dataframe(df,x_col='path',y_col='label',images_size=(256,256),class_mode='binary',subset='validation')

class_indices = {}

for key in train_gen.class_indices.keys():

class_indices[train_gen.class_indices[key]] = key

print(class_indices)

We can visualize some of the augmented images generated by our training set.

sns.set_style('dark')

pics = 6 # Set the number of pics

fig, ax = plt.subplots(int(pics // 2), 2, figsize=(15, 15))

plt.suptitle('Generated images in training set')

ax = ax.ravel()

for i in range((pics // 2) * 2):

ax[i].imshow(train_gen[0][0][i])

ax[i].axes.xaxis.set_visible(False)

ax[i].axes.yaxis.set_visible(False)

Our model will correspond of several convolutional layers, each followed by a maximum- pooling subcaste. Convolutional layers are the core structure blocks of CNNs, allowing the model to learn spatial scales of features from the images. Max- pooling layers help reduce the dimensionality of the point maps, making the model more effective. We will also add completely connected( thick) layers towards the end of the model. These layers help combine the features learned by the convolutional layers and make the final bracket decision. The affair subcaste will have a single neuron with a sigmoid activation function, which labors a probability score indicating whether the image contains fire. After defining the model armature, we’ll publish a summary to review the structure and the number of parameters in each subcaste. This step is important to insure that the model is rightly configured.

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Conv2D, MaxPool2D, Flatten, Dense

model = Sequential()

model.add(Conv2D(filters=32,kernel_size = (2,2),activation='relu',input_shape = (256,256,3)))

model.add(MaxPool2D())

model.add(Conv2D(filters=64,kernel_size=(2,2),activation='relu'))

model.add(MaxPool2D())

model.add(Conv2D(filters=128,kernel_size=(2,2),activation='relu'))

model.add(MaxPool2D())

model.add(Flatten())

model.add(Dense(64,activation='relu'))

model.add(Dense(32,activation = 'relu'))

model.add(Dense(1,activation = 'sigmoid'))

model.summary()Next, we’ll compile the model using the Adam optimizer and the binary cross-entropy loss function. The Adam optimizer is widely used in deep learning for its efficiency and adaptive learning rate. Binary cross-entropy is appropriate for our binary classification problem (fire vs. non-fire).

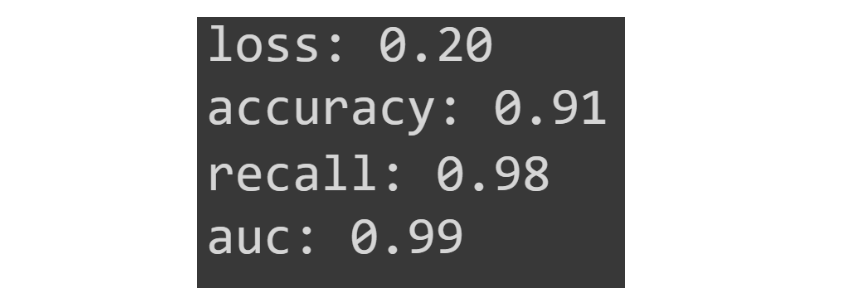

We’ll also specify additional metrics, such as accuracy, recall, and area under the curve (AUC), to evaluate the model’s performance during training and validation.

Callbacks are a powerful feature in TensorFlow that allows us to monitor and control the training process. We’ll use two important callbacks:

#Compiling Model

from tensorflow.keras.metrics import Recall,AUC

from tensorflow.keras.utils import plot_model

model.compile(optimizer='adam',loss='binary_crossentropy',metrics=['accuracy',Recall(),AUC()])

#Defining Callbacks

from tensorflow.keras.callbacks import EarlyStopping, ReduceLROnPlateau

early_stoppping = EarlyStopping(monitor='val_loss',patience=5,restore_best_weights=True)

reduce_lr_on_plateau = ReduceLROnPlateau(monitor='val_loss',factor=0.1,patience=5)

Model fitting refers to the process of training a machine learning model on a dataset. During this process, the model learns the underlying patterns in the data by adjusting its parameters (weights and biases) to minimize the loss function. In the context of deep learning, this involves several epochs of forward and backward passes over the training data.

model.fit(x=train_gen,batch_size=32,epochs=15,validation_data=val_gen,callbacks=[early_stoppping,reduce_lr_on_plateau])After training, we’ll evaluate the model’s performance on the validation set. This step helps us understand how well the model generalizes to new data. We’ll also visualize the training history to see how the loss and metrics evolved over time.

eval_list = model.evaluate(val_gen,return_dict=True)

for metric in eval_list.keys():

print(metric+f": {eval_list[metric]:.2f}")

eval_list = model.evaluate(val_gen,return_dict=True)

for metric in eval_list.keys():

print(metric+f": {eval_list[metric]:.2f}")

Finally, we’ll demonstrate how to use the trained model to predict whether a new image contains fire. This step involves loading an image, preprocessing it to match the model’s input requirements, and using the model to make a prediction.

We’ll download a sample image from the internet and load it using TensorFlow’s image processing functions. This step involves resizing the image and normalizing its pixel values.

Using the trained model, we’ll make a prediction on the loaded image. The model will output a probability score, which we’ll round to get a binary classification (fire or non-fire). We’ll also map the prediction to its corresponding label using the class indices.

# Downloading the image

!curl https://static01.nyt.com/images/2021/02/19/world/19storm-briefing-texas-fire/19storm-briefing-texas-fire-articleLarge.jpg --output predict.jpg

#loading the image

img = image.load_img('predict.jpg')

img

img = image.img_to_array(img)/255

img = tf.image.resize(img,(256,256))

img = tf.expand_dims(img,axis=0)

print("Image Shape",img.shape)

prediction = int(tf.round(model.predict(x=img)).numpy()[0][0])

print("The predicted value is: ",prediction,"and the predicted label is:",class_indices[prediction])

Developing an Deep Learning-based fire detection system like “Flame Guardian” exemplifies the transformative potential of Deep Learning in addressing real-world challenges. By meticulously following each step, from data preparation and visualization to model building, training, and evaluation, we have created a robust framework for detecting fire in images. This project not only highlights the technical intricacies involved deep learning but also emphasizes the importance of leveraging technology for safety and disaster prevention.

As we conclude, it’s evident that DL Model can significantly enhance fire detection systems, making them more efficient, reliable, and scalable. While traditional methods have their merits, the incorporation of Deep Learning introduces a new level of sophistication and accuracy. The journey of developing “Flame Guardian” has been both enlightening and rewarding, showcasing the immense capabilities of modern technologies.

A. “Flame Guardian” is a fire detection system that uses convolutional neural networks (CNNs) and TensorFlow to identify fire in images with high accuracy.

A. Early fire detection is crucial for preventing extensive damage, saving lives, and reducing the environmental impact of fires. Rapid response can significantly mitigate the devastating effects of both wildfires and structural fires.

A. Challenges include handling data variability (differences in color, intensity, and environment), minimizing false positives, ensuring real-time processing capabilities, and scalability to handle large datasets.

A. Image augmentation enhances the training dataset by applying random transformations such as rotation, zoom, and shear. This helps the model generalize better by exposing it to a variety of scenarios, improving its robustness.

A. The model is evaluated using metrics like accuracy, recall, and the area under the curve (AUC). These metrics help assess how well the model distinguishes between fire and non-fire images and its overall reliability.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.

Lorem ipsum dolor sit amet, consectetur adipiscing elit,

Great Research

Very Helpful !! Helped me develop my project